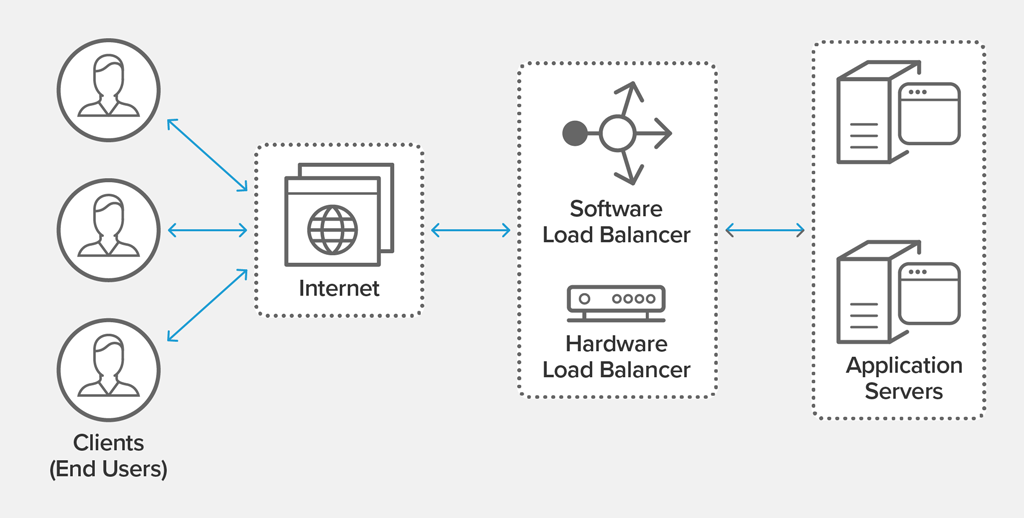

Load balancing is defined as the methodical and efficient distribution of network or application traffic across multiple servers in a server farm. Each load balancer sits between client devices and backend servers, receiving and then distributing incoming requests to any available server capable of fulfilling them.

What are load balancers and how do they work?

A load balancer may be:

- A physical device, a virtualized instance running on specialized hardware or a software process.

- Incorporated into application delivery controllers (ADCs) designed to more broadly improve the performance and security of three-tier web and microservices-based applications, regardless of where they’re hosted.

- Able to leverage many possible load balancing algorithms, including round robin, server response time and the least connection method to distribute traffic in line with current requirements.

Regardless of whether it’s hardware or software, or what algorithm(s) it uses, a load balancer distributes traffic to different web servers in the resource pool to ensure that no single server becomes overworked and subsequently unreliable. Load balancers effectively minimize server response time and maximize throughput.

Indeed, the role of a load balancer is sometimes likened to that of a traffic cop, as it is meant to systematically route requests to the right locations at any given moment, thereby preventing costly bottlenecks and unforeseen incidents. Load balancers should ultimately deliver the performance and security necessary for sustaining complex IT environments, as well as the intricate workflows occurring within them.

Load balancing is a highly scalable approach to handling the multitude of requests from modern multi-application, multi-device workflows. In tandem with platforms that enable seamless access to the numerous different applications, files and desktops within today’s digital workspaces, load balancing supports a more consistent and dependable end-user experience for employees.

Hardware- vs software-based load balancers

Hardware-based load balancers work as follows:

- They are typically high-performance appliances, capable of securely processing multiple gigabits of traffic from various types of applications.

- These appliances may also contain built-in virtualization capabilities, which consolidate numerous virtual load balancer instances on the same hardware.

- That allows for more flexible multi-tenant architectures and full isolation of tenants, among other benefits.

In contrast, software-based load balancers:

- Can fully replace load balancing hardware while delivering analogous functionality and superior flexibility.

- May run on common hypervisors, in containers or as Linux processes with minimal overhead on bare-metal servers and are highly configurable depending on the use cases and technical requirements in question.

- Can save space and reduce hardware expenditures.

L4, L7 and GSLB load balancers, explained

An employee’s day-to-day experience in a digital workspace can be highly variable. Their productivity may fluctuate in response to everything from the security measures on their accounts to the varying performance of the many applications they use. For example, more than 70% of sales personnel regularly struggle to connect data from multiple disparate applications—a task that can be worsened by poor responsiveness due to inadequate load balancing.

In other words, digital workspaces are heavily application driven. As concurrent demand for software-as-a-service (SaaS) applications in particular continues to ramp up, reliably delivering them to end users can become a challenge if proper load balancing isn’t in place. Employees who already struggle to navigate multiple systems, interfaces and security requirements will bear the additional burden of performance slowdowns and outages.

To promote greater consistency and keep up with ever-evolving user demand, server resources must be readily available and load balanced at Layers 4 and/or 7 of the Open Systems Interconnection (OSI) model:

- Layer 4 (L4) load balancers work at the transport level. That means they can make routing decisions based on the TCP or UDP ports that packets use along with their source and destination IP addresses. L4 load balancers perform Network Address Translation but do not inspect the actual contents of each packet.

- Layer 7 (L7) load balancers act at the application level, the highest in the OSI model. They can evaluate a wider range of data than L4 counterparts, including HTTP headers and SSL session IDs, when deciding how to distribute requests across the server farm.

Load balancing is more computationally intensive at L7 than at L4, but it can also route requests more effectively at L7, because the load balancer has more context about each client request when choosing a server.
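
The difference in available context can be illustrated with a minimal Python sketch. The pool names, the `L4_POOLS` table and the `/api/` and `static.example.com` rules are hypothetical, chosen only for illustration: the L4 function sees nothing but protocol and port, while the L7 function can also read the HTTP path and headers.

```python
# Hypothetical pools keyed by (protocol, destination port): all an L4 balancer can see.
L4_POOLS = {
    ("tcp", 443): ["web-1", "web-2"],
    ("udp", 53): ["dns-1"],
}


def l4_pool(protocol, dst_port):
    """Choose a server pool from transport-level fields only."""
    return L4_POOLS[(protocol, dst_port)]


def l7_pool(headers, path):
    """Choose a server pool from application-level data (HTTP path and headers)."""
    if path.startswith("/api/"):
        return ["api-1", "api-2"]
    if headers.get("Host") == "static.example.com":
        return ["static-1"]
    return ["web-1", "web-2"]


print(l4_pool("tcp", 443))
print(l7_pool({"Host": "www.example.com"}, "/api/orders"))
```

Both HTTPS requests would land in the same pool at L4, whereas the L7 rules can send API calls and static assets to different servers.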

In addition to basic L4 and L7 load balancing, global server load balancing (GSLB) can extend the capabilities of either type across multiple data centers so that large volumes of traffic can be efficiently distributed and so that there will be no degradation of service for the end user.

As applications are increasingly hosted in cloud data centers located in multiple geographies, GSLB enables IT organizations to deliver applications with greater reliability and lower latency to any device or location. Doing so ensures a more consistent experience for end users when they are navigating multiple applications and services in a digital workspace.
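
One way a GSLB decision might look, as a rough Python sketch: skip unhealthy data centers, then pick the one with the lowest latency to the client's region. The site names, the `latency_ms` figures and the `pick_site` function are assumptions made for illustration, not a description of any particular product.

```python
def pick_site(sites, client_region):
    """Return the name of the healthy site with the lowest latency to the client."""
    healthy = [site for site in sites if site["healthy"]]
    if not healthy:
        raise RuntimeError("no healthy data center available")
    best = min(healthy, key=lambda site: site["latency_ms"][client_region])
    return best["name"]


# Hypothetical data centers with made-up latency measurements per client region.
SITES = [
    {"name": "us-east", "healthy": False, "latency_ms": {"eu": 90, "us": 15}},
    {"name": "eu-west", "healthy": True, "latency_ms": {"eu": 20, "us": 95}},
    {"name": "ap-south", "healthy": True, "latency_ms": {"eu": 140, "us": 210}},
]

print(pick_site(SITES, "us"))  # us-east is down, so the next-closest site is chosen
```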

What are some of the common load balancing algorithms?

A load balancer, or the ADC that includes it, will follow an algorithm to determine how requests are distributed across the server farm. There are plenty of options in this regard, ranging from the very simple to the very complex.

Round Robin

Round robin is a simple technique for making sure that a virtual server forwards each client request to a different server based on a rotating list. It is easy for load balancers to implement, but it doesn’t take into account the load already on a server. There is a danger that a server may receive a lot of processor-intensive requests and become overloaded.
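
A minimal Python sketch of the rotation, assuming a fixed list of hypothetical server names (`app-1` to `app-3`) and a made-up `RoundRobinBalancer` class:

```python
from itertools import cycle


class RoundRobinBalancer:
    """Hands out servers in a fixed rotating order, ignoring their current load."""

    def __init__(self, servers):
        if not servers:
            raise ValueError("at least one server is required")
        self._rotation = cycle(list(servers))

    def pick(self):
        # Each call simply advances to the next server in the rotation.
        return next(self._rotation)


balancer = RoundRobinBalancer(["app-1", "app-2", "app-3"])
picks = [balancer.pick() for _ in range(5)]
print(picks)  # ['app-1', 'app-2', 'app-3', 'app-1', 'app-2']
```

Nothing in `pick` looks at how busy a server is, which is exactly the weakness described above.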

Least Connection Method

Whereas round robin does not account for the current load on a server (only its place in the rotation), the least connection method does make this evaluation and, as a result, it usually delivers superior performance. Virtual servers following the least connection method will seek to send requests to the server with the fewest active connections.
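
As an illustrative Python sketch, the balancer only needs a per-server count of open connections. The `LeastConnectionBalancer` class and its `acquire`/`release` methods are hypothetical names; ties are broken by list order here, which is an assumption rather than a rule of the method.

```python
class LeastConnectionBalancer:
    """Sends each new request to the server with the fewest active connections."""

    def __init__(self, servers):
        self.active = {server: 0 for server in servers}

    def acquire(self):
        # min() returns the first server with the lowest count, so ties go to list order.
        server = min(self.active, key=self.active.get)
        self.active[server] += 1
        return server

    def release(self, server):
        # Call when a connection closes so the count reflects current load.
        if self.active[server] > 0:
            self.active[server] -= 1


lb = LeastConnectionBalancer(["app-1", "app-2"])
first, second = lb.acquire(), lb.acquire()
lb.release(second)
print(lb.acquire())  # app-2 now has no open connections, so it is chosen again
```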

Least Response Time Method

More sophisticated than the least connection method, the least response time method relies on the time taken by a server to respond to a health monitoring request. The speed of the response is an indicator of how loaded the server is and the overall expected user experience. Some load balancers will take into account the number of active connections on each server as well.
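
A small Python sketch of this selection, assuming the response time of the latest health probe is already known for each server. The `ServerHealth` record is hypothetical, and using active connections as a tie-breaker is only one possible way to combine the two signals.

```python
from dataclasses import dataclass


@dataclass
class ServerHealth:
    name: str
    probe_ms: float          # response time of the latest health-monitoring request
    active_connections: int  # optional second signal, used here only to break ties


def pick_least_response_time(servers):
    """Return the name of the server with the fastest probe response."""
    best = min(servers, key=lambda s: (s.probe_ms, s.active_connections))
    return best.name


pool = [
    ServerHealth("app-1", 40.0, 2),
    ServerHealth("app-2", 12.5, 9),
    ServerHealth("app-3", 12.5, 3),
]
print(pick_least_response_time(pool))  # app-3
```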

Least Bandwidth Method

A relatively simple algorithm, the least bandwidth method looks for the server currently serving the least amount of traffic as measured in megabits per second (Mbps).
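
For illustration, the Mbps figure can be derived from bytes transferred over a measurement window. The sketch below assumes the byte counters are collected elsewhere; `mbps` and `pick_least_bandwidth` are hypothetical helper names.

```python
def mbps(bytes_transferred, window_seconds):
    """Convert a byte count over a time window into megabits per second."""
    return bytes_transferred * 8 / 1_000_000 / window_seconds


def pick_least_bandwidth(bytes_by_server, window_seconds):
    """Return the server currently serving the least traffic in Mbps."""
    return min(
        bytes_by_server,
        key=lambda server: mbps(bytes_by_server[server], window_seconds),
    )


# Made-up byte counts observed over a one-second window.
traffic = {"app-1": 5_000_000, "app-2": 1_250_000, "app-3": 2_500_000}
print(mbps(traffic["app-2"], 1))         # 10.0
print(pick_least_bandwidth(traffic, 1))  # app-2
```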

Least Packets Method

The least packets method selects the service that has received the fewest packets in a given time period.
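
The "given time period" can be modeled as a sliding window over packet timestamps. This is a sketch under assumptions: the `PacketWindow` class, the 10-second window and the timestamps are invented for the example.

```python
from collections import deque


class PacketWindow:
    """Counts packets per server inside a sliding time window."""

    def __init__(self, window_seconds):
        self.window = window_seconds
        self.events = {}  # server name -> deque of packet timestamps

    def record(self, server, timestamp):
        self.events.setdefault(server, deque()).append(timestamp)

    def count(self, server, now):
        timestamps = self.events.setdefault(server, deque())
        # Discard packets that have fallen out of the window.
        while timestamps and timestamps[0] <= now - self.window:
            timestamps.popleft()
        return len(timestamps)

    def pick(self, servers, now):
        return min(servers, key=lambda server: self.count(server, now))


window = PacketWindow(window_seconds=10)
for ts in (1, 95, 96):
    window.record("app-1", ts)
for ts in (97, 98, 99):
    window.record("app-2", ts)
print(window.pick(["app-1", "app-2"], now=100))  # app-1: its packet at t=1 has expired
```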

Hashing Methods

Methods in this category make decisions based on a hash of various data from the incoming packet. This includes connection or header information, such as source/destination IP address, port number, URL or domain name.
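
A minimal Python sketch of one such method, hashing the source IP address and destination port. The choice of SHA-256 and of these two fields is an assumption for illustration; real load balancers offer various hash inputs and functions.

```python
import hashlib


def pick_by_hash(servers, src_ip, dst_port):
    """Map a connection to a server using a stable hash of packet fields."""
    key = f"{src_ip}:{dst_port}".encode()
    digest = hashlib.sha256(key).digest()
    # Reduce the first 8 bytes of the digest to an index into the server list.
    index = int.from_bytes(digest[:8], "big") % len(servers)
    return servers[index]


servers = ["app-1", "app-2", "app-3"]
chosen = pick_by_hash(servers, "203.0.113.7", 443)
# The same client and port always hash to the same server.
print(chosen == pick_by_hash(servers, "203.0.113.7", 443))  # True
```

Because the mapping depends on `len(servers)`, adding or removing a server reshuffles most clients in this simple modulo scheme.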

Custom Load Method

The custom load method enables the load balancer to query the load on individual servers via SNMP. The administrator can define which load metrics to query, such as CPU usage, memory and response time, and then combine them to suit their requirements.
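
Combining the metrics could be as simple as a weighted sum. In the Python sketch below, the SNMP polling itself is not shown: the metric values are assumed to be already collected and normalized to the range 0 to 1, and the weights and function names are hypothetical.

```python
def load_score(metrics, weights):
    """Weighted sum of normalized load metrics; lower means less loaded."""
    return sum(weights[name] * metrics[name] for name in weights)


def pick_custom_load(metrics_by_server, weights):
    """Return the server with the lowest combined load score."""
    return min(
        metrics_by_server,
        key=lambda server: load_score(metrics_by_server[server], weights),
    )


# Administrator-chosen weights (assumed values) for each metric of interest.
weights = {"cpu": 0.5, "memory": 0.3, "response": 0.2}
polled = {
    "app-1": {"cpu": 0.9, "memory": 0.2, "response": 0.1},
    "app-2": {"cpu": 0.4, "memory": 0.6, "response": 0.3},
}
print(pick_custom_load(polled, weights))  # app-2
```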

Why is load balancing necessary?

An ADC with load balancing capabilities helps IT departments ensure scalability and availability of services. Its advanced traffic management functionality can help a business steer requests more efficiently to the correct resources for each end user. An ADC offers many other functions (for example, encryption, authentication and web application firewalling) that can provide a single point of control for securing, managing and monitoring the many applications and services across environments and ensuring the best end-user experience.